Everybody who has an interest in influencing public opinion will happily pay a handful of dollars to amplify their voice. Governments, political groups, corporations, traders, and plain trolls will continue to shout through bot armies as long as it is this cheap. Bots are cheaper than buying ad space, less risky than a network of spies, and more efficient and less prone to failure than creating 50 fake accounts by hand. If bots could be identified and tagged, the fake news industry would suffer a heavy blow. Here is how we can make this happen.

Did Russia tamper with the US election? Of course. It’s so easy. Why wouldn’t they? And so have the Chinese, 4chan, the Republicans and the Democrats. Of course, Net Neutrality opponents send in bots to make themselves appear like they matter. The weapons industry, the oil industry, finance, tobacco, and the top 1%… Everybody that is unpopular but has the means will jump on the opportunity to make themselves heard.

If you are not part of the powerful minority trying to appear democratically relevant, you probably agree that information technology has a bot problem. It’s not just the Russians who engage in the manipulation of public discourse. Everybody who wants to amplify their propaganda uses bots. It’s time to put an end to it. Science fiction author Isaac Asimov defined three rules for robots:

1–A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2–A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

3–A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

Today, information robots are hidden behind a screen, programmed to make us believe they are humans. This calls for a fourth rule. Popular culture has a great answer for that: “どうもありがとうミスターロボット, 秘密を知りたい.” It’s slightly encoded in Japanese. It says: “Thank you robot, we want to know your secret.” What secret?

The time has come at last (secret, secret I’ve got a secret)

To throw away this mask (secret, secret I’ve got a secret)

Now everyone can see (secret, secret I’ve got a secret)

My true identity…

To make sure that robots follow the first three rules, we need to make sure whom we are interacting with: Robots must be identifiable.

Facebook vs Twitter

Bots are not only a problem on social media, and they are not the only problem we have with social media. But social media has taken center stage in filtering news and forming public opinion, and within social media, bots are a core amplifier that needs to be addressed first.

Facebook recognized that they play a role in forming public opinion. They reacted by announcing they plan on throttling down content from news organizations. Their plan is to vet and rate news through the same logic that has gotten them in trouble in the first place: “let the people decide”. Do you hear that crackling noise? That’s 4chan logging into Facebook, waiting to vote.

While Facebook tries to patch its spam holes and shirk its political responsibility for future catastrophes, Twitter addressed the bot problem head-on. They have just banned 58,000 accounts they suspect are Russian bots and informed their users that some of the accounts they had followed were Russian bots.

Some have ridiculed Twitter for the false positives they got. And, as so often, the old “Who decides?” “What if they punish the wrong ones?” “The hackers will just get smarter” camps have thrown their same fatalist clichés at those who decide to take responsibility. Luckily, Google doesn’t treat SEO like a lost fight.

Here is a heavy dose of practical philosophy for you: You know who decides? Those who take responsibility. And those who decide and take responsibility shape their destiny. For those who wait and see, other people will decide. This is a moment where Twitter can gain precious ground on a seemingly invincible Facebook.

Checkmark for bots?

So far, Twitter has focused on Russian bots. All bots should be marked. Political bots, commercial bots, financial bots, troll bots, fun bots, help bots, sexbots, religious bots, good, bad, angry, sad and scientific bots. We need to know who is talking to us and whom we are talking to. How?

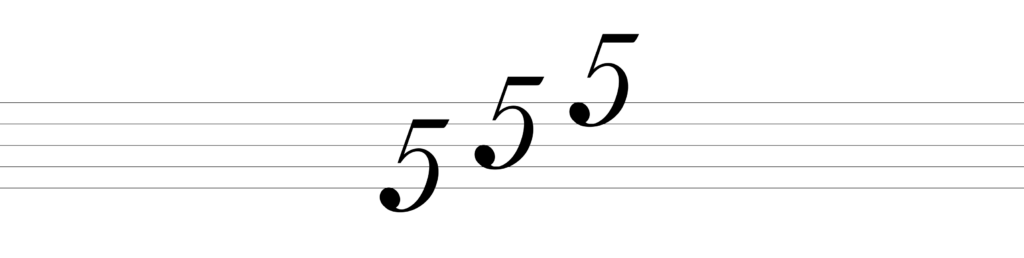

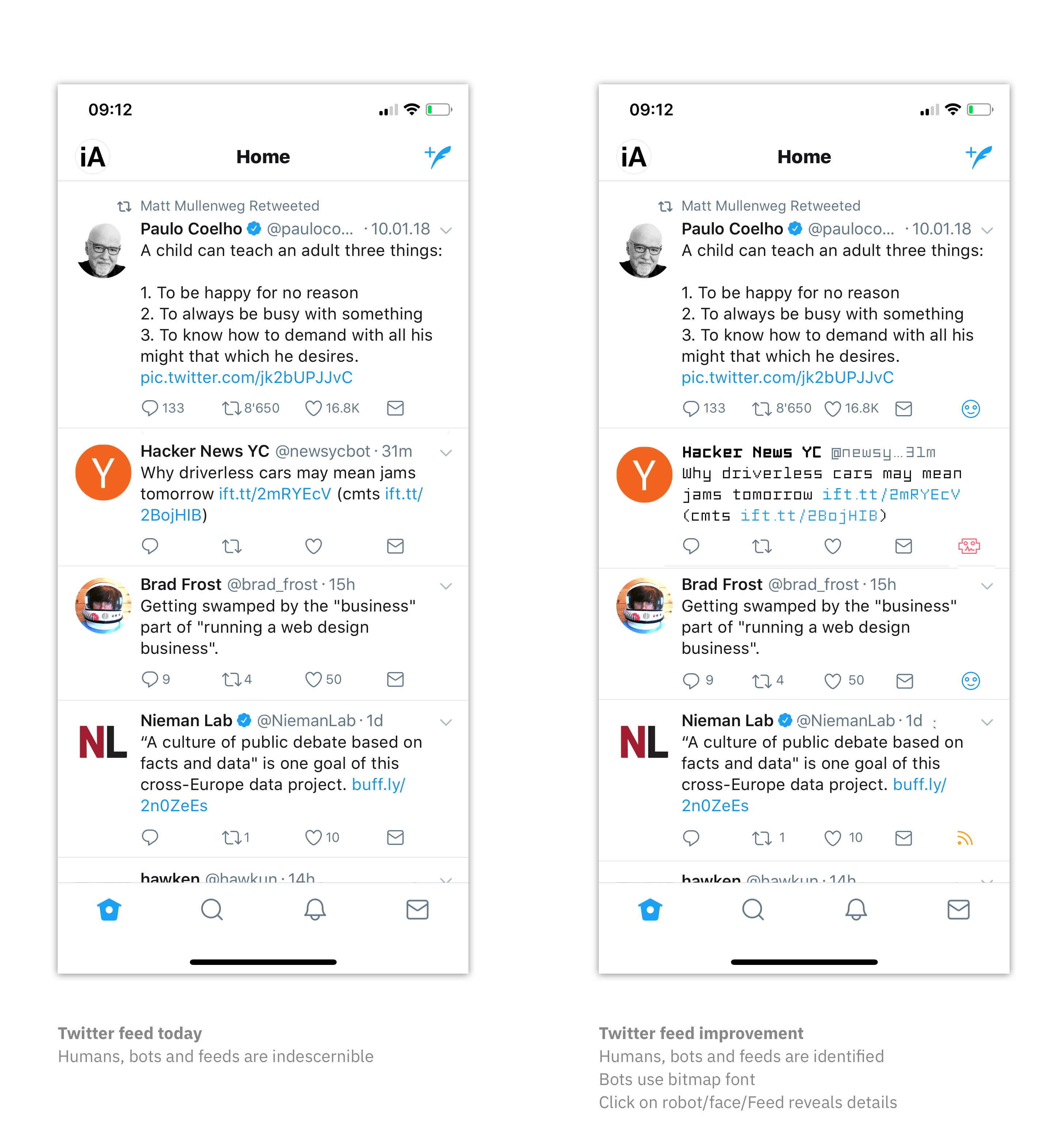

Twitter has a checkmark for confirmed accounts. How about a checkmark for bots? It’s not hard to do. On today’s Twitter, humans, bots, and feeds look identical. Adding a checkmark for bots and giving them a different, robotic typography could do wonders. With this machine-like font and a checkmark, they would stand out, and machines and humans would become discernible:

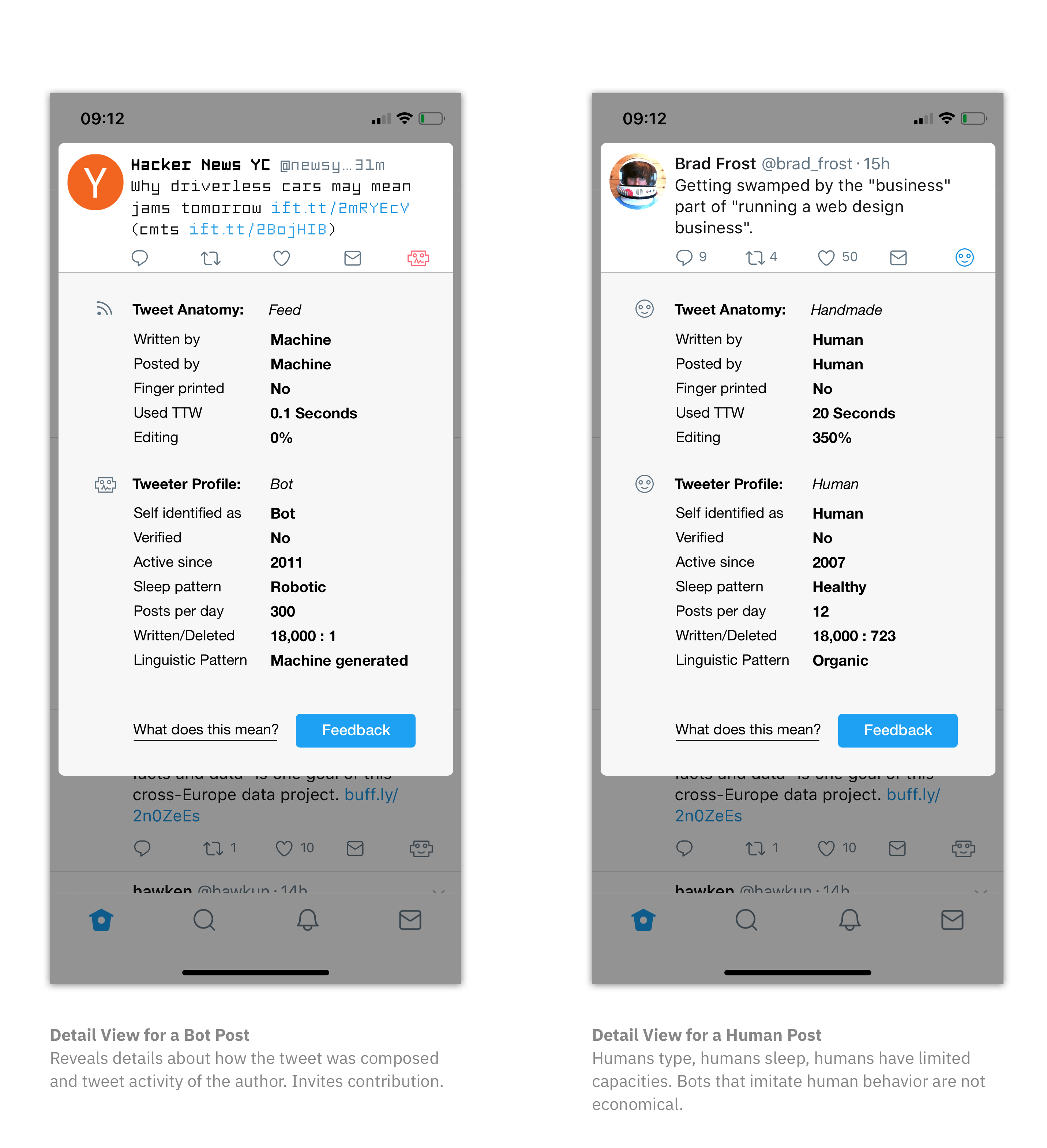

In addition to a simple Bot-or-Not identification, Twitter should offer detailed information on why it assumes a bot is a bot and a human is human. It should encourage bots, humans and feeds to self-identify:

As you can see in these sketches, a series of identification factors is needed to automatically verify an account as being human. It is understood that any automated security can be cheated.

Make Spamming Expensive

The idea here is not to make cheating impossible. The idea is to make cheating expensive. Programming an army of bots in a system without checks and balances is economically interesting. It happens because it is cheap.

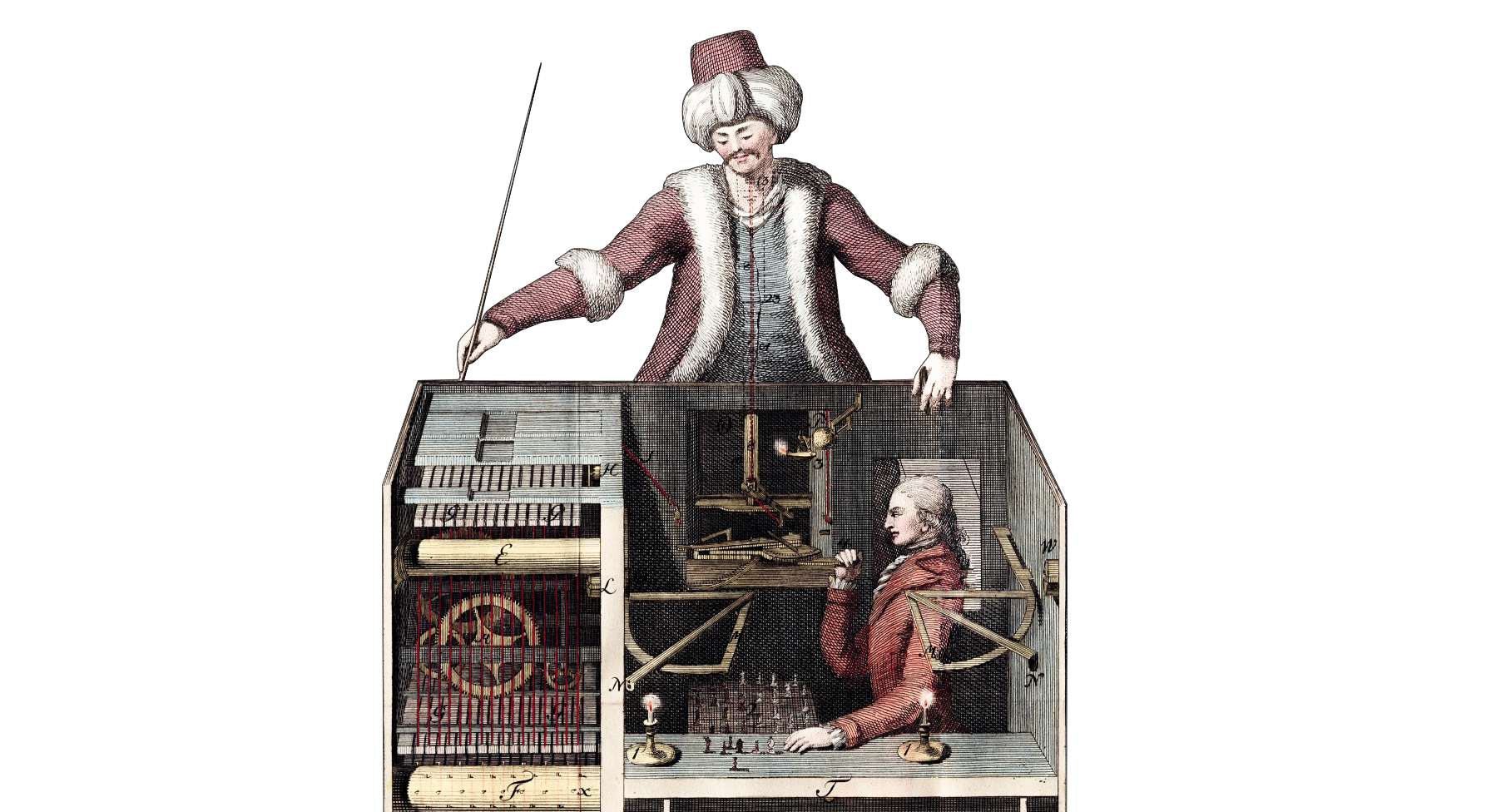

If you have to build mechanical devices that enter information, your cost per spam message will go up sharply. If you use very cheap human labor in combination with so-called persona software, sadly, you may still be in an economically viable range. But the likelihood of human error increases. Low-paid humans who are employed to manipulate information make mistakes. Humans increase the risk of a leak. And, compared to machine-run bot armies, humans will always be far more expensive.

Stricter guidelines for bots will not only make it more expensive to post. It will also become more expensive when you get caught. Running a bot that pretends to be human should result in termination of the account and in restrictions for and exposure of people running these accounts. Cheating has to hurt. It has to be risky enough so cheaters get nervous and make mistakes.

How to Spot a Bot

From this relatively simple logic “make bots recognizable”, you can derive a series of checks and balances that make spamming, not impossible, but expensive. There are many ways machines can imitate us, but at some point machines imitating us become more expensive than humans cheating. Here are some requirements that will increase the cost of spamming:

Require that information is entered by hand: a) Measure whether human touch is used on touch devices. b) Check how long it takes to type a message. c) Take into account how many corrections were needed to complete the message. d) Measure organic movement while editing. If done properly, it can get very expensive in terms of time and infrastructure to cheat the system.
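
Here is a rough sketch of checks b) and c). It assumes the client can report how long a message took to compose and how many corrections were made. The `TypingSample` fields and both thresholds are our own hypothetical choices for illustration, not anything Twitter is known to expose:

```python
from dataclasses import dataclass


@dataclass
class TypingSample:
    text_length: int        # characters in the final message
    seconds_to_type: float  # time from first keystroke to submit
    corrections: int        # backspace/delete events while composing


# Assumed limits, picked for illustration only.
MAX_HUMAN_CHARS_PER_SECOND = 15.0
LONG_MESSAGE_LENGTH = 100


def looks_hand_typed(sample: TypingSample) -> bool:
    """Return True if the sample is plausible for a person typing by hand."""
    if sample.text_length <= 0 or sample.seconds_to_type <= 0:
        return False
    speed = sample.text_length / sample.seconds_to_type
    if speed > MAX_HUMAN_CHARS_PER_SECOND:
        return False  # faster than a person can realistically type
    if sample.text_length >= LONG_MESSAGE_LENGTH and sample.corrections == 0:
        return False  # a long message without a single correction is suspicious
    return True
```

A bot can fake these numbers, of course. The point is that faking them convincingly for every post costs time and infrastructure.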

Require that tweets are posted by hand: Check if a Tweet was posted automatically or by hand. Offer a possibility to prove authenticity through fingerprint, face or iris scanning. Posts don’t need to be fingerprinted every time. Once in a while is enough.

Check for human time metrics: Humans need sleep. Humans have physical limits to how much they can post within a certain time span.
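
A minimal sketch of such a time check, assuming we have an account's post timestamps in a single time zone. The four-hour sleep window and the limit of 60 posts per hour are hypothetical values we picked for illustration:

```python
from collections import Counter
from datetime import datetime, timedelta
from typing import Iterable

# Assumed limits, picked for illustration only.
MIN_SLEEP = timedelta(hours=4)
MAX_POSTS_PER_HOUR = 60


def exceeds_human_limits(timestamps: Iterable[datetime]) -> bool:
    """Return True if the posting pattern is beyond what a person can sustain."""
    ordered = sorted(timestamps)
    if not ordered:
        return False
    # Count posts per clock hour and look at the busiest one.
    per_hour = Counter(
        t.replace(minute=0, second=0, microsecond=0) for t in ordered
    )
    if max(per_hour.values()) > MAX_POSTS_PER_HOUR:
        return True
    # Only judge sleep when the data covers at least one full day.
    covers_a_day = ordered[-1] - ordered[0] >= timedelta(days=1)
    longest_gap = max(
        (later - earlier for earlier, later in zip(ordered, ordered[1:])),
        default=timedelta(0),
    )
    return covers_a_day and longest_gap < MIN_SLEEP
```

An account that posts every hour for two days straight never shows a gap long enough to sleep and gets flagged. An account that goes quiet overnight does not.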

Check for human error: Humans make mistakes and delete tweets. Some bots post-and-delete-post-and-delete so they look more human. A simple tweeted vs deleted measure can serve as a guide to whether an account is in the human or robotic range.
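
The tweeted vs deleted measure could be sketched like this. The boundaries of the "human range" below are assumptions on our part. Real values would have to come from actual account data:

```python
def classify_by_deletions(
    posted: int,
    deleted: int,
    min_posts: int = 50,   # assumed: below this there is too little data
    low: float = 0.005,    # assumed: accounts that never delete look scripted
    high: float = 0.25,    # assumed: constant post-and-delete looks scripted
) -> str:
    """Place an account in the human or robotic range by its deletion ratio."""
    if posted < min_posts:
        return "unknown"
    ratio = deleted / posted
    if low <= ratio <= high:
        return "human range"
    return "robotic range"
```

With these assumed boundaries, an account that deleted 30 of 1,000 tweets lands in the human range. One that deleted none, or 900 of them, lands in the robotic range.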

Linguistic patterns: Humans have peculiar quirks when they speak. Your language is as unique as a fingerprint. Language processing is quite advanced these days. It is mostly used to fool us, but it can as well be used to add additional hurdles for those who abuse technology.

Social Control: A feedback button offers the opportunity for human intervention in case a bot slips through the cracks of auto-detection or an account gets falsely accused. The feedback process must be cleverly designed so it is even more expensive to game than the regular posting process.

Technical Fingerprinting: Twitter has your IP address, your device ID and tons of analytics data. The same machinery that uses your personal data to sell you soap and toasters can be used to assess the likelihood of an account being a bot. Users who buy things are less likely to be bots. And if they buy things to appear more credible, good, then the price for spamming goes up.
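
No single signal on this list is decisive, so they would have to be weighed together. Here is a minimal sketch of one way to do that, assuming each check has already been reduced to a yes or no. The signal names and the point values are hypothetical and only show the shape of the idea:

```python
from typing import Dict

# Assumed point values (they add up to 100), picked for illustration only.
SIGNAL_POINTS = {
    "typed_by_hand": 30,
    "biometric_check_passed": 25,
    "human_time_metrics": 20,
    "has_purchase_history": 15,
    "human_deletion_ratio": 10,
}


def human_score(signals: Dict[str, bool]) -> int:
    """Add up the points of every signal that is present and True (0 to 100)."""
    return sum(
        points
        for name, points in SIGNAL_POINTS.items()
        if signals.get(name, False)
    )
```

A higher score means an account is more expensive to fake, not that it is proven human. Every signal a spammer has to forge raises the price per post.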

This is a draft made in 10 years and an afternoon. Some of these measures can be implemented in a couple of days, others will take time. It’s a quick draft as a present from us to Twitter. There is lots of room for improvement. The list is incomplete and we have no doubt that the Twitter design team will do a much better job than we do at this.

Conclusion

In order to make sure that robots serve us and not the other way around, we need to make sure that we know when we talk to robots and when they talk to us. Asimov’s three laws don’t make sense if we cannot discern human from robot. Identifying robots will kill a core amplifier of spam on social media and elsewhere.

We know that Twitter design reads our blog and we hope that the Twitter leadership will appreciate the intention of this post. Moreover, we hope that they dare to mark bots even though their active user numbers might go down significantly. We believe that the earthquake that a sudden marking of all bots will cause will have a deep impact not just on Twitter but on all social media. The long-term effect will be an increase in trust. If this is done correctly, it will trigger a lot of bot and feed accounts to self-identify.

It is understood that bots are not only a problem on social media and that they are not the only problem we have with social media, but they are a cheap amplifier of many issues we have today. Getting rid of them will deal a serious blow to the tricksters and criminals who are trying to mess with our minds, our lives and our future.

For those who didn’t know: The illustration is our riff on Peter Steiner’s Internet-famous cartoon from The New Yorker on July 5th, 1993.